AI Customer Support Strategy: What to Automate and What to Keep Human

AI customer support strategy is a framework for deciding where AI can assist, where it must escalate, and how support teams keep responses accurate, policy-aligned, and safe at scale.

Most teams don’t fail because their AI isn’t smart enough. They run into trouble when an embedded assistant gets treated like a safe replacement for judgment. In customer support, a confident wrong answer is expensive. It can trigger refunds, break policy, and quietly damage trust. That’s why an AI customer support strategy matters more than turning on one more AI feature.

The real question is who gets to make the call, and under what rules. This guide shows where automation is low-risk, where it needs review, and how to keep outcomes consistent with Think → Verify → Act, guardrails against hallucinations, and handoffs that preserve context so customers don’t have to repeat themselves.

What "AI Customer Support Strategy" Really Means in 2026

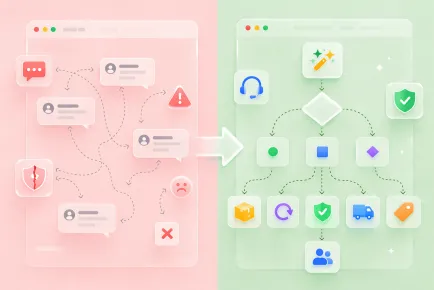

"Strategy" often becomes shorthand for just turning the assistant on. One misconception is that you can plug an assistant into your helpdesk, point it at a knowledge base, and call it done. The other is the opposite extreme: if the assistant can answer, it should answer everything. Both approaches usually break on the same kinds of requests: real customer questions that involve judgment, exceptions, or policy nuance.

A solid AI customer experience strategy is much more specific. It clarifies where the assistant participates and where it is not allowed to make the call. It also sets quality rules, so answers stay grounded as policies, products, and edge cases change.

If you can’t explain those basics in plain language, you don’t have a strategy yet. You have a feature turned on and hope it does the rest.

AI Chatbots, AI Assistants, AI Agents, Copilots: Roles Without Confusion

These labels get mixed up easily, and that’s how teams give a tool more autonomy than it should have. To keep terms clear, the table below compares each role by allowed autonomy and the failures teams see most often.

| Role | Allowed autonomy | Typical failures |
|---|---|---|
| Chatbot (scripts/FAQ) | Answers only what you prewrite and approve. | Dead ends outside the script; frustrating loops. |
| AI Assistant (embedded AI) | Built into Shopify or Magento as an AI feature. Summarizes, drafts, classifies, suggests next steps. | Misses store-specific nuance; drafts that ignore edge cases; inconsistent tone without guidance. |
| AI chatbot (generated answers) | Generates replies based on provided sources and conversation context. | Hallucinations; overpromising; outdated policy references. |
| AI agent (actions + workflow) | Takes actions across systems based on rules and approvals. | Wrong actions at scale; compliance issues; costly mistakes without guardrails. |
| Copilot (for agents) | Assists a human agent with drafts and suggestions; a person decides what’s sent or done. | Over-trust; copy-paste errors; weak gains if context is poor. |

When the autonomy level is wrong, mistakes scale faster than the support queue does. The risk isn’t the label. It’s unclear responsibility.

Customer Support Automation: Where AI Helps and Where It Shouldn't Decide

Not every support task is equally automatable. The safest wins come from workflows where the assistant can’t accidentally promise money, bend policy, or trigger an irreversible action.

A simple rule works well: automate what’s repetitive and verifiable, and keep a human in the loop when the request involves judgment, exceptions, or a real commitment.

High-value, low-risk automation

These are tasks where speed matters and the answer can be grounded in approved sources.

- FAQ and basic policy questions (when the policy text is current)

- Data collection (order number, email, photos, reason)

- Classification & routing (intent tagging, priority)

- Summaries & drafts (ticket recap, draft reply for review)

High-risk automation (requires control)

These requests look simple, but one missing detail can change the correct outcome.

- Returns (eligibility edge cases, deadlines)

- Cancellations (fulfillment stage, fraud checks)

- Delivery promises (backorders, warehouse constraints)

- Compensation (credits, replacements, shipping refunds)

What should never be fully autonomous

If the outcome affects money, legal exposure, or policy exceptions, the system should default to a controlled handoff.

- Money (refunds, credits, discounts)

- Legal commitments (warranties, guarantees, compliance statements)

- Policy exceptions (manual overrides)

Risk Matrix

Use a simple matrix to decide what the assistant is allowed to do per intent. It keeps automated customer support consistent across agents, shifts, and busy seasons. Read it as a default path: auto-reply for low-risk intents, hand off anything that needs judgment, and block or escalate critical cases.

| Intent / task | Risk level | Allowed automation | Required sources / policies |
|---|---|---|---|
| Where is my order? | Low | Auto-reply allowed | Shipping policy + tracking source |
| Change shipping address | Medium | Handoff | Order edit rules + verification steps |
| Cancel my order | High | Handoff / block if uncertain | Cancellation policy + payment status |
| Refund request | High | Handoff only | Refund policy + eligibility rules + order facts |
| Discount request | High | Block + alternate flow | Discount policy + escalation rules |
| Warranty / legal claim | Critical | Block + immediate handoff | Legal policy + human approval |

If an intent isn’t mapped yet, default to a handoff, then add it once the policy and sources are clear.
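
One way to make the matrix enforceable is to store it as data and look up every incoming intent against it. The sketch below is illustrative only: the intent keys, the `IntentRule` type, and the `rule_for` helper are hypothetical names, not part of any helpdesk API, and "Handoff / block if uncertain" is simplified to a plain handoff.

```python
from dataclasses import dataclass
from enum import Enum


class Automation(Enum):
    AUTO_REPLY = "auto_reply"
    HANDOFF = "handoff"
    BLOCK = "block"


@dataclass(frozen=True)
class IntentRule:
    risk: str
    automation: Automation
    required_sources: tuple


# Rows mirror the risk matrix; the intent keys are hypothetical labels.
RISK_MATRIX = {
    "order_status": IntentRule("low", Automation.AUTO_REPLY, ("shipping_policy", "tracking")),
    "change_address": IntentRule("medium", Automation.HANDOFF, ("order_edit_rules", "verification_steps")),
    # "Handoff / block if uncertain" is simplified to a handoff here.
    "cancel_order": IntentRule("high", Automation.HANDOFF, ("cancellation_policy", "payment_status")),
    "refund_request": IntentRule("high", Automation.HANDOFF, ("refund_policy", "eligibility_rules", "order_facts")),
    "discount_request": IntentRule("high", Automation.BLOCK, ("discount_policy", "escalation_rules")),
    "warranty_claim": IntentRule("critical", Automation.BLOCK, ("legal_policy", "human_approval")),
}

# Unmapped intents fall back to a handoff until policy and sources are clear.
DEFAULT_RULE = IntentRule("unmapped", Automation.HANDOFF, ())


def rule_for(intent: str) -> IntentRule:
    """Return the matrix row for an intent, or the handoff default."""
    return RISK_MATRIX.get(intent, DEFAULT_RULE)
```

Keeping the default outside the table means a new intent can never slip into auto-reply by accident; it has to be added deliberately.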

AI vs Human Customer Service: Draft the Answer, Approve the Decision

When an assistant is embedded into your support workflow, it’s tempting to treat it like a faster agent. But speed is not the same as responsibility. A generated reply can sound polished and still be wrong in the one place that matters: what it commits your business to do next.

The safer model is simple: let AI do the heavy lifting, but keep decisions accountable. Use generative AI customer support for analysis and drafting, not for independent commitments.

The assistant can read the thread, pull relevant details, check approved sources, and propose a response with reasoning. A human then approves what gets sent or routes the case when it touches money, policy exceptions, or anything legally sensitive. Think of it as a draft and an editor. You still move faster, but you move with guardrails.

Shareable rule of thumb: AI should draft the answer. Your team should approve the decision.

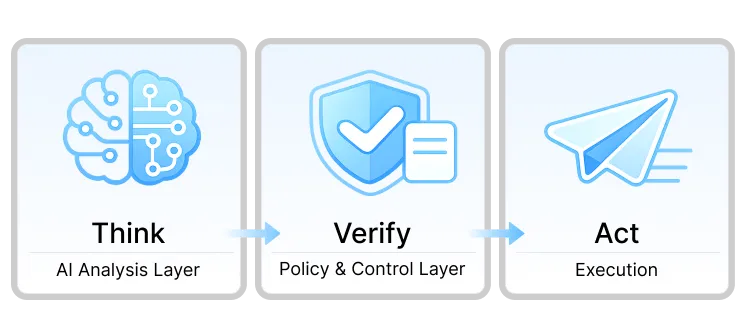

AI Customer Support Architecture: Think → Verify → Act

You don’t need an assistant that can send messages on its own. You need a workflow that separates analysis from approval and keeps execution under control. In practice, this is an AI customer service implementation that helps you move faster on routine work without turning edge cases into customer-facing promises.

Think: AI analysis layer

Think happens behind the scenes. The assistant reads the message and ticket history, identifies intent, pulls relevant details from approved sources, and drafts a reply with reasoning, confidence, and risk flags. It’s preparation, not a promise, so it shouldn’t be the layer that speaks to the customer.

Verify: policy & control layer

Verify is the checkpoint. The draft gets compared against your business rules and the sources it relies on. Then the workflow chooses one outcome:

- Safe auto-reply (low-risk intents with solid sourcing)

- Handoff to a human (judgment, exceptions, missing context)

- Block + alternate flow (money, legal, unclear requests)
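
The three outcomes can be sketched as a single decision function. This is a minimal illustration under stated assumptions: the `Draft` fields, the flag names, and the 0.8 confidence threshold are placeholders chosen for the example, and "unclear requests" are not modeled separately.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    intent_risk: str                 # "low", "medium", "high", or "critical"
    confidence: float                # 0.0 to 1.0, produced by the Think layer
    sources: list = field(default_factory=list)
    risk_flags: set = field(default_factory=set)


BLOCKING_FLAGS = {"money", "legal"}  # assumed flag names
CONFIDENCE_THRESHOLD = 0.8           # assumed value; tune from your own logs


def verify(draft: Draft) -> str:
    """Pick one outcome: 'auto_reply', 'handoff', or 'block'."""
    # Money, legal, and critical intents never go out automatically.
    if draft.intent_risk == "critical" or draft.risk_flags & BLOCKING_FLAGS:
        return "block"
    # Auto-reply only for low-risk intents with sources, no flags,
    # and confidence at or above the threshold.
    if (
        draft.intent_risk == "low"
        and draft.sources
        and not draft.risk_flags
        and draft.confidence >= CONFIDENCE_THRESHOLD
    ):
        return "auto_reply"
    # Everything else needs a person.
    return "handoff"
```

The function is written so that auto-reply is the narrowest path: any missing source, raised flag, or low score falls through to a handoff.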

Act: execution

Act is the controlled output. A safe case gets a policy-aligned response. Anything that needs a person is routed with the key context already packaged, so the agent can continue without re-reading the whole thread. Blocked cases follow a fallback path, like collecting missing details or escalating to a specialist.

What this looks like in a real team

Day to day, the assistant reviews the full history, checks documentation, and produces a short summary plus a draft reply. A human reviews it, adjusts for nuance, and sends the final response. If the draft misses the mark, the agent asks for a tighter pass and iterates until it’s accurate and usable.

The point isn’t that AI never makes mistakes. It’s that mistakes get caught before they become customer-facing commitments, while your team saves time on reading and first drafts.

How to Prevent AI Hallucinations Before They Reach Customers

In support, a made-up detail can turn into a promise a customer will hold you to. The goal is simple: keep unreliable answers from reaching customers, especially when a request touches refunds, delivery commitments, or policy exceptions.

1. Response policy (must-have)

Start with a short rulebook the assistant follows every time:

- What it is allowed to do (draft, summarize, ask clarifying questions, cite approved sources)

- What it must never do (invent steps, guess policy, promise outcomes it can’t verify)

- When it must escalate (money, legal commitments, exceptions, low confidence)

2. Guardrails that actually work

Guardrails only help when they match real workflow. This is where AI in customer service becomes dependable:

- Grounding on approved sources (knowledge base, policy pages, order data, tracking)

- Confidence thresholds (below the threshold, no auto-reply)

- Risk flags that force control (money, delivery promises, legal language)
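
As a rough illustration of risk flags, a draft can be scanned for risky language before it is allowed anywhere near a customer. The patterns below are deliberately crude and entirely hypothetical; a real deployment would likely pair keyword checks with the intent classification rather than rely on them alone.

```python
import re

# Hypothetical keyword patterns, one per risk category named in the text.
RISK_PATTERNS = {
    "money": re.compile(
        r"\b(refund\w*|credit\w*|discount\w*|compensat\w*|reimburs\w*)\b",
        re.IGNORECASE,
    ),
    "delivery_promise": re.compile(
        r"\b(will arrive|arrives? by|delivered by|guaranteed delivery)\b",
        re.IGNORECASE,
    ),
    "legal": re.compile(
        r"\b(warrant\w*|guarantee\w*|liab\w*|complian\w*)\b",
        re.IGNORECASE,
    ),
}


def risk_flags(draft_text: str) -> set:
    """Return the set of risk categories whose pattern appears in the draft."""
    return {
        name
        for name, pattern in RISK_PATTERNS.items()
        if pattern.search(draft_text)
    }
```

A non-empty result would force the draft into review instead of auto-reply, regardless of how confident the model sounds.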

3. Simple hallucination QA checklist

Before you expand automation to a new intent, check the most common drift triggers:

- Gaps in your knowledge base (nothing reliable to ground on)

- Conflicting policies (rules that disagree or "exceptions" that aren’t documented)

- Edge cases (partial shipments, backorders, bundles, one-off exceptions)

If you treat hallucinations as a workflow problem instead of a model problem, you’ll fix them faster and with far fewer customer-facing mistakes.

How AI Transfers Context to Humans

Sooner or later, a case needs a person. A smooth handoff feels like the same conversation continuing, not a restart.

Trust breaks when customers repeat themselves, details get lost between systems, or the next agent contradicts what the assistant said earlier. The moment a customer thinks the assistant promised something, you’re no longer solving a ticket; you’re repairing credibility.

To avoid that, every handoff should include a small, consistent context packet. This is what turns conversational AI into a real workflow upgrade instead of an extra layer in the middle:

- Intent + confidence (what the customer is trying to do, and how sure the system is)

- Extracted fields (order number, email, product, dates, shipping status, reason)

- AI summary (one-paragraph recap of what happened so far)

- Sources used (which policies or KB pages were referenced)

- Risk flags (money, delivery commitments, legal, policy exception)
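
The packet above maps naturally onto a small, fixed data structure. The following is a sketch, assuming a hypothetical `ContextPacket` type serialized to JSON for whatever helpdesk receives it; the field names are illustrative, not a vendor schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ContextPacket:
    intent: str                      # what the customer is trying to do
    confidence: float                # how sure the system is (0.0 to 1.0)
    extracted_fields: dict           # order number, email, product, dates, ...
    summary: str                     # one-paragraph recap of the thread
    sources_used: list = field(default_factory=list)
    risk_flags: list = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the packet so it can be attached to the handoff."""
        return json.dumps(asdict(self), indent=2)
```

A usage example with made-up values:

```python
packet = ContextPacket(
    intent="refund_request",
    confidence=0.62,
    extracted_fields={"order_number": "1001", "reason": "damaged item"},
    summary="Customer reports a damaged item and asks for a refund.",
    sources_used=["refund_policy"],
    risk_flags=["money"],
)
print(packet.to_json())
```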

To preserve trust, the handoff message itself matters. When you escalate, signal continuity and avoid language that sounds like starting over. A simple line like this usually works well: "Our specialist already has the details and will continue from here." If you need the customer to provide something, be specific about what’s missing and why, so it feels like progress, not friction.

Customer Service Automation: Metrics to Track

It’s easy to get excited about the number of tickets handled and miss what actually matters: did the customer get the right outcome, with the right level of confidence?

These metrics show whether you’re improving quality and trust, not just moving conversations off the queue:

- CSAT after AI interactions: Track satisfaction specifically for AI-assisted threads, not just your overall CSAT.

- Escalation reasons: Tag why a case was handed off (missing data, policy exception, low confidence, money-related request) so you know what to fix next.

- Blocked AI decisions: Track when guardrails stop an unsafe reply or action, then review the top triggers to refine rules and sources.

- Resolution time after handoff: Measure whether the context packet helps agents finish faster without making customers repeat themselves.

- Agent time saved: Estimate time reduced on reading long threads, searching policies, and drafting first replies.

Vanity metrics can still help, but only alongside quality signals:

- Deflection without CSAT: Fewer tickets means nothing if customers leave frustrated or uncertain.

- Speed without correctness: Fast answers that trigger follow-ups, refunds, or policy conflicts are negative progress.

If these move in the right direction together, you’re building a system that stays accurate under pressure and escalates safely when it should.
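
Two of these signals, CSAT on AI-assisted threads and escalation reasons, are simple to compute from a ticket export. The sketch below assumes each ticket is a dict with hypothetical `ai_assisted`, `csat`, and `escalation_reason` keys; adapt the names to whatever your helpdesk actually exports.

```python
from collections import Counter
from statistics import mean


def ai_csat(tickets):
    """Average CSAT across AI-assisted tickets that received a rating."""
    scores = [
        t["csat"]
        for t in tickets
        if t.get("ai_assisted") and t.get("csat") is not None
    ]
    return mean(scores) if scores else None


def escalation_reasons(tickets):
    """Count handoffs by tagged reason, ignoring tickets with no reason."""
    return Counter(
        t["escalation_reason"] for t in tickets if t.get("escalation_reason")
    )
```

Reviewing `escalation_reasons(tickets).most_common(3)` each week is one lightweight way to decide which policy gap or missing source to fix next.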

AI Support Roadmap: A Realistic 30-Day Rollout Plan

You don’t need a perfect system on day one. You need a safe sequence that starts with control, proves reliability, and only then expands autonomy.

Week 1: Policies + KB foundation

- Focus: Response policy, high-risk intents, clean approved sources.

- Gate: Policy approved and sources list finalized.

Week 2: AI analysis only (no auto-replies)

- Focus: Intent + extracted fields + draft with reasoning, confidence, and risk flags. Humans send all replies. Log failures.

- Gate: A target share of drafts is usable with minor edits (often ~60–70% as an early goal).

Week 3: Safe intents + controlled handoff

- Focus: Auto-replies only for low-risk intents. Medium/high risk routes to humans with a context packet. Critical intents are blocked with an alternate flow.

- Gate: CSAT stays stable on low-risk auto-replies, and escalation reasons are clear.

Week 4: Copilot + routing optimization

- Focus: Copilot inside the agent workspace, routing tuned from logs, safe intents expanded one category at a time.

- Gate: Metrics stay stable (CSAT steady, resolution time improves, blocked decisions trend down, agents report time saved).

If you miss a gate, don’t push forward. Fix the policy, sources, or routing first, then expand again.
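
A gate is easier to hold when it is computed rather than judged by feel. As a hedged example, the Week 2 gate could be checked from a review log like this; the outcome labels and the 0.6 default target are assumptions for illustration (the low end of the ~60–70% range mentioned above), not fixed rules.

```python
# Outcomes an agent might record after reviewing each AI draft (hypothetical labels).
USABLE_OUTCOMES = {"sent_as_is", "minor_edits"}


def usable_share(review_log):
    """Fraction of reviewed drafts that were usable with at most minor edits."""
    if not review_log:
        return 0.0
    usable = sum(1 for outcome in review_log if outcome in USABLE_OUTCOMES)
    return usable / len(review_log)


def week2_gate_passed(review_log, target=0.6):
    """True when the usable share meets the target; otherwise hold the rollout."""
    return usable_share(review_log) >= target
```

An empty log counts as a failed gate here, on the assumption that no evidence should not unlock more autonomy.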

Conclusion

At this point, the pattern is clear. Most embedded assistants fail not because they can’t write a decent reply, but because the workflow lets them commit to outcomes without enough control. With an AI customer support strategy, you keep the assistant focused on research and drafts, then verify anything that carries risk before it reaches the customer.

If you want a practical next step, pick a few low-risk intents, write a one-page response policy, and start measuring CSAT and escalation reasons right away. From there, expand autonomy only when the rules, sources, and handoffs stay reliable under real volume.

Frequently Asked Questions

What are the best AI tools for customer support in 2026?

There isn’t one "best" tool for every team, so it helps to compare capabilities that keep automation safe. Prioritize workflow fundamentals: grounding on approved sources, confidence or risk signals, and clean handoffs that preserve context. Extras like tone controls or multilingual support matter only after those basics are solid.

AI chatbot for customer service vs embedded assistant: what’s the difference?

An embedded assistant works inside the workflow (drafts, summaries, routing). A customer-facing bot talks to shoppers directly, so it needs stricter boundaries and predictable escalation for anything order-specific or risky.

Which metrics should you track for customer service AI?

Start with quality signals, not volume: CSAT after assisted interactions, escalation reasons, and blocked risky decisions. Then watch whether handoffs get faster and agents spend less time searching and drafting.

What are the AI customer service best practices for preventing hallucinations?

Begin with analysis and drafting, not auto-replies. Define no-go zones (money, legal, exceptions), require verification for anything risky, and expand scope only when results stay stable on low-risk intents.
